Many database systems provide sample databases with the product. A good intro to popular ones that includes discussion of samples available for other databases is. One trivial sample that PostgreSQL ships with is the. This has the advantage of being built-in and supporting a scalable data generator: you can make databases of any size, from about 16MB to about 600GB, with the current version.

PgFoundry Samples

The latest collection of PostgreSQL-compatible database samples is at. It includes three commonly used benchmark databases:

World: Based on the sample. It has a list of cities, countries, and the languages spoken in them.

dellstore2: A PostgreSQL port of a database-neutral e-commerce test application. The original code supports three size scales in its data generator (10MB, 1GB, and 100GB); currently only the smallest data set has been ported to PostgreSQL. Uses this test data to show some advanced queries.

Pagila: Based on MySQL's replacement for World, which is itself inspired by the Dell DVD Store.

There are some other sample databases there as well, such as a USDA Food database and a large list of country data based on the ISO 3166 standard.

Other Samples

from has details of land sales in the UK, going back several decades, and is 3.5GB as of August 2016 (this applies only to the 'complete' file, 'pp-complete.csv').

No registration is required. Download the file 'pp-complete.csv', which contains all records.
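At around 3.5GB, pp-complete.csv is better streamed than read in one go. Below is a minimal sketch using Python's standard csv module; it assumes the file is UTF-8 encoded (an assumption, not something stated above), and `count_records` is a hypothetical helper name.

```python
import csv


def count_records(path):
    """Stream a large CSV file and count its records without loading it into memory."""
    # newline="" lets the csv module handle line endings itself, so a quoted
    # field containing a newline is still counted as part of a single record.
    with open(path, newline="", encoding="utf-8") as f:
        return sum(1 for _ in csv.reader(f))
```

Counting records through `csv.reader` rather than counting lines matters when quoted fields can contain embedded newlines; the reader yields one row per record, not per physical line.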

I recently had to work with some data that came in a huge Microsoft Access database. Because I like SQLite (and despise Access), I decided to export the data to an SQLite file. The first thing I needed to do was to somehow get all the data out of the db.

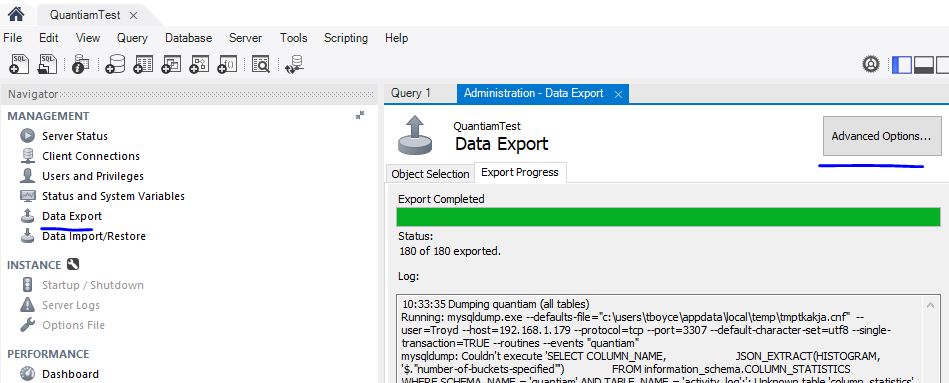

pg_dump is an effective tool for backing up a PostgreSQL database. In its default plain-text format it produces a .sql script containing the CREATE TABLE, ALTER TABLE, and COPY statements needed to rebuild the source database, so psql is all you need to restore it. By piping pg_dump's output straight into psql, you can back up a local database and restore it into a remote one with a single command.
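Here is a minimal sketch of that single-command copy (the shell equivalent is `pg_dump SRC | psql -h HOST -d DEST`), driven from Python. It assumes `pg_dump` and `psql` are on the PATH and that passwords are handled outside the script (for example via a `~/.pgpass` file); the function names and the default `postgres` user are hypothetical.

```python
import subprocess


def build_commands(src_db, dest_host, dest_db, dest_user="postgres"):
    """Return the argv lists for the dump side and the restore side of the pipe."""
    # Plain-format dump (pg_dump's default), written to stdout.
    dump_cmd = ["pg_dump", src_db]
    # ON_ERROR_STOP=1 makes psql stop and exit non-zero on the first SQL error.
    restore_cmd = [
        "psql", "-h", dest_host, "-U", dest_user, "-d", dest_db,
        "-v", "ON_ERROR_STOP=1",
    ]
    return dump_cmd, restore_cmd


def copy_database(src_db, dest_host, dest_db, dest_user="postgres"):
    """Pipe pg_dump of a local database into psql connected to a remote server."""
    dump_cmd, restore_cmd = build_commands(src_db, dest_host, dest_db, dest_user)
    dump = subprocess.Popen(dump_cmd, stdout=subprocess.PIPE)
    restore = subprocess.Popen(restore_cmd, stdin=dump.stdout)
    # Close our copy of the pipe so pg_dump receives SIGPIPE if psql exits early.
    dump.stdout.close()
    restore_rc = restore.wait()
    dump_rc = dump.wait()
    if dump_rc != 0 or restore_rc != 0:
        raise RuntimeError(f"pg_dump exited {dump_rc}, psql exited {restore_rc}")
```

Usage, with hypothetical names: `copy_database("mydb", "db.example.com", "mydb")`. The target database must already exist on the remote server, since a plain-format dump does not create it unless pg_dump is run with `--create`.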

Being a Linux user complicates things a bit, but thanks to mdb-tools it’s possible to process .mdb files without resorting to Windows and buying Access. Using mdb-tools directly can be tedious if you want to export a large db with multiple tables, so when I looked for a way to automate it, I came across a post with a nice script that dumps every table in a .mdb file to a separate CSV file. While useful, I wanted something that I could easily import into SQLite, so I modified the script to generate an SQL dump of the db. Given a db file, it writes to stdout the SQL statements describing the schema of the DB, followed by INSERTs for each table. Because mdb-tools doesn’t support SQLite as a backend, the dump uses the MySQL dialect, but it should mostly work with SQLite as well (SQLite will mostly ignore the parts it can’t process, such as COMMENTs).
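One reason the MySQL dialect is workable is that SQLite accepts MySQL-style backtick-quoted identifiers for compatibility, which also covers Access table and column names that contain spaces. The statements below are hypothetical and written in that style for illustration; they are not actual mdb-schema or mdb-export output.

```python
import sqlite3

# Hypothetical MySQL-dialect statements (backtick-quoted identifiers with spaces).
MYSQL_STYLE_SQL = """
CREATE TABLE `Sales Orders` (`Order ID` int, `Customer` varchar (255));
INSERT INTO `Sales Orders` (`Order ID`, `Customer`) VALUES (1, 'Acme');
"""

conn = sqlite3.connect(":memory:")
conn.executescript(MYSQL_STYLE_SQL)
rows = conn.execute("SELECT * FROM `Sales Orders`").fetchall()
print(rows)  # [(1, 'Acme')]
```

Type names such as `varchar (255)` are also accepted, because SQLite maps declared types to a storage affinity instead of enforcing them.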

The easiest way to use the script is.

```python
#!/usr/bin/env python3
#
# AccessDump.py
# A simple script to dump the contents of a Microsoft Access database.
# It depends upon the mdbtools suite.

import subprocess
import sys

if len(sys.argv) != 2:
    sys.exit("usage: AccessDump.py <database.mdb>")

DATABASE = sys.argv[1]

# Dump the schema for the DB, using the MySQL dialect
subprocess.run(["mdb-schema", DATABASE, "mysql"], check=True)

# Get the list of table names with 'mdb-tables' (-1: one name per line)
tablenames = subprocess.run(
    ["mdb-tables", "-1", DATABASE],
    stdout=subprocess.PIPE, text=True, check=True,
).stdout
tables = tablenames.splitlines()

print("BEGIN;")  # start a transaction, speeds things up when importing
# Flush so BEGIN; is written before the child processes write to stdout
sys.stdout.flush()

# Dump each table as MySQL-dialect INSERT statements using 'mdb-export'
for table in tables:
    if table != "":
        subprocess.run(["mdb-export", "-I", "mysql", DATABASE, table], check=True)

print("COMMIT;")  # end the transaction
sys.stdout.flush()
```
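To load the dump into SQLite without going through the command-line shell, Python's built-in sqlite3 module can run the whole SQL text with `executescript`. Below is a minimal sketch; the helper names, the script path `AccessDump.py`, and the idea of capturing the script's output in memory are assumptions for illustration. Note that `executescript` stops and raises `sqlite3.OperationalError` at the first statement SQLite cannot parse, so any MySQL-only syntax left in the dump will surface as an error.

```python
import sqlite3
import subprocess
import sys


def dump_access(mdb_path, script="AccessDump.py"):
    """Run the dump script on an .mdb file and return the SQL it prints."""
    result = subprocess.run(
        [sys.executable, script, mdb_path],
        stdout=subprocess.PIPE, text=True, check=True,
    )
    return result.stdout


def load_dump(sql_text, db_path):
    """Execute an SQL dump against an SQLite database and return the connection."""
    conn = sqlite3.connect(db_path)
    # Runs every statement in order, including the dump's own BEGIN;/COMMIT;.
    conn.executescript(sql_text)
    return conn
```

Usage, with hypothetical file names: `load_dump(dump_access("data.mdb"), "data.sqlite")`.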